How To Optimize Your Images

The adage “a picture is worth a thousand words” holds true in the world of Internet marketing. The human brain is hard-wired to respond to images on a visceral level, meaning you’ll receive stronger visitor engagement by including them on your website. In fact, an infographic published at JeffBullas.com suggests that articles with images receive 94% more views than text-only articles. This week we will look at types of image formats and reveal the basics of online image optimization.

Image File Types

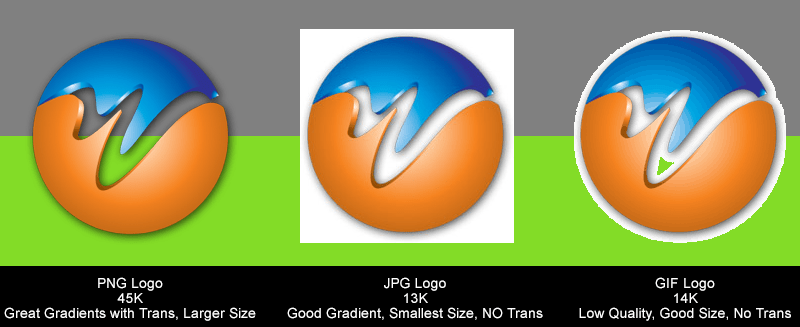

Three image formats dominate the web today: .jpg, .png, and .gif. Although other image types are available, these three are all you need 99% of the time on websites. Let’s quickly look at when to use each and their advantages.

.JPG files are the most widely used images on the net. When saved through a program like Photoshop, .JPG images allow the greatest compression with the highest image quality retention. Compression is one of the biggest concerns when dealing with images. Smaller image file sizes allow for faster page load times, and Google loves pages that load quickly.

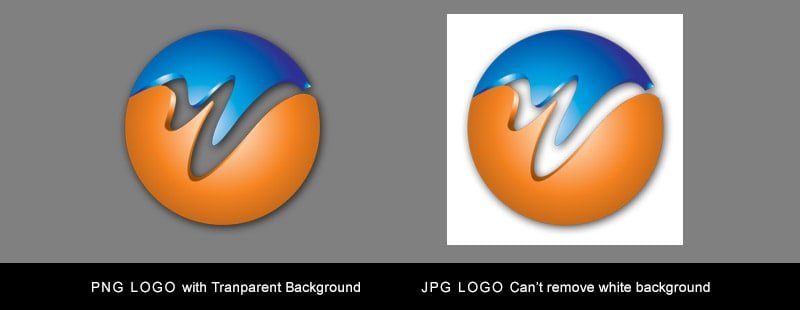

One drawback of .JPG files is the inability to have a transparent background layer. Consequently, you cannot overlay an image on different colored web page backgrounds without seeing the background of the JPG file. This is where .PNG files come into play.

Properly prepared PNG files allow us to take an image, drop out the background and use this image regardless of the web page background color. Further, PNG files have a larger color palette than .JPG, which helps reduce banding in particular image gradients.

.PNG files will have better overall image quality compared to a .JPG file, but at the cost of a larger file size.

.GIF files are rarely used in today’s websites but are worth mentioning. GIF files have smaller color palettes (at most 256 colors), which reduces their ability to display smooth gradients. GIF files should only be used as a last resort and only when solid colors are in the image, never when gradients are present.

Use the following rules when choosing image file types and you will be good to go.

- Use JPG for photos and images containing non-banding gradients.

- Use JPG if you do not need to create a transparent background.

- Use PNG files when you need to overlay an image on various background colors.

- Use PNG files when images show banding in gradient areas, but watch your file size.

- Use GIF files if an image has one or two solid colors without gradients and your file size restrictions require it over JPG file sizes.
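The rules above can be written down as a small decision helper. This is only a sketch of the rules of thumb in this article; the function name and its four flags are made up for illustration, and you still have to judge by eye whether an image shows banding.

```python
def choose_image_format(needs_transparency: bool,
                        has_banding_gradients: bool = False,
                        solid_colors_only: bool = False,
                        strict_size_limit: bool = False) -> str:
    """Return 'png', 'gif', or 'jpg' following the rules of thumb above."""
    # JPG cannot have a transparent background, so overlays need PNG.
    if needs_transparency:
        return "png"
    # GIF is a last resort: flat colors only, and only when file size forces it.
    if solid_colors_only and strict_size_limit:
        return "gif"
    # Visible banding in gradients is the other reason to accept PNG's larger files.
    if has_banding_gradients:
        return "png"
    # Photos and everything else default to JPG.
    return "jpg"


print(choose_image_format(needs_transparency=False))  # a plain photo
print(choose_image_format(needs_transparency=True))   # a logo over colored backgrounds
```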

Image File Size

Once you have an image edited, you need to reduce the file size before you upload it to your web server. Larger files translate into longer load times, and just a few large images can slow the entire web page down to a crawl. If you do not have access to a program such as Photoshop, there are other ways to reduce the file size of images. One option is to use a tool like smush.it. Developed by Yahoo, this tool strips unnecessary data from image files to make them smaller and more efficient without sacrificing quality.
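Before compressing anything, it helps to know which images are the heavy ones. The sketch below walks a folder and lists image files above a size limit. The 200 KB default is an arbitrary assumption rather than a recommended threshold, and the function name is hypothetical.

```python
from pathlib import Path

IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png", ".gif"}


def find_large_images(folder, max_bytes=200_000):
    """Return (path, size_in_bytes) pairs for images over max_bytes, biggest first."""
    oversized = []
    for path in Path(folder).rglob("*"):
        # Only look at regular files with a known image extension.
        if path.is_file() and path.suffix.lower() in IMAGE_SUFFIXES:
            size = path.stat().st_size
            if size > max_bytes:
                oversized.append((path, size))
    return sorted(oversized, key=lambda item: item[1], reverse=True)
```

Calling `find_large_images("uploads")` on a hypothetical `uploads` folder would give you a to-do list of files to shrink before they go live.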

Alt Text

Arguably the single most influential element of web-based images is alt text. You have to remember that search engines are unable to tell what images are (not yet at least), so they rely on signals like alt text. Alt text is also shown as a placeholder when a visitor’s browser is unable to load the image, or when a user has turned off image loading in their browser. Go through your website’s images to ensure each one has unique and relevant alt text describing its content.

Here is an example of how to add alt text to an image:

<img src="dog-jumping.jpg" alt="Dog Jumping" />
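If your site has more than a handful of pages, checking alt text by hand gets tedious. Here is a minimal sketch that uses Python's built-in `html.parser` module to list images with missing or empty alt text. The class and function names are hypothetical, and it assumes every image on the page should carry a description.

```python
from html.parser import HTMLParser


class MissingAltFinder(HTMLParser):
    """Collect the src of every <img> tag whose alt text is missing or empty."""

    def __init__(self):
        super().__init__()
        self.missing = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        alt = attributes.get("alt")
        # Treat both a missing alt attribute and a blank one as a problem.
        if alt is None or not alt.strip():
            self.missing.append(attributes.get("src", "(no src)"))


def images_missing_alt(html):
    finder = MissingAltFinder()
    finder.feed(html)
    return finder.missing


page = '<img src="dog-jumping.jpg" alt="Dog Jumping" /><img src="9saf155fwf.jpg">'
print(images_missing_alt(page))
```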

File Name

Ask yourself which of these two file names looks more appealing:

9saf155fwf.jpg or dog-jumping.jpg?

It may not hold as much weight as alt text, but search engines (and visitors) pay attention to the file names of images. Putting forth the extra effort to use appropriate file names will help search engines rank them appropriately, which subsequently means more people will see them.
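Turning a short description into a clean file name is easy to automate. The helper below is a sketch with a made-up name; it lowercases the description and joins the words with hyphens, which is the pattern used by dog-jumping.jpg above.

```python
import re


def descriptive_file_name(description, extension="jpg"):
    """Turn a description such as 'Dog Jumping' into 'dog-jumping.jpg'."""
    # Replace every run of characters that is not a letter or digit with one hyphen.
    slug = re.sub(r"[^a-z0-9]+", "-", description.lower()).strip("-")
    return f"{slug}.{extension.lstrip('.').lower()}"


print(descriptive_file_name("Dog Jumping"))
```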

Resize Image Manually

Most content management systems (CMS) feature built-in editor tools that allow you to resize your images quickly. In WordPress, for instance, you click Add Media > Upload Files > Select Files, and then Edit Image. Using this tool you can scale down your image so it is smaller and more visually appealing on your website.

The problem with built-in resizing tools, however, is that visitors are still forced to download the original image (which is usually a much larger file size). By manually scaling down your images beforehand, you’ll reduce load times while promoting a more positive user experience.

Also, always include the height and width in your image source code. Without them, the browser cannot reserve space for the image until the file has downloaded, so it has to rearrange the page layout once the image arrives.

<img src="dog-jumping.jpg" height="150" width="400" alt="Dog Jumping" />
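When you scale an image down by hand, you need a height that matches the new width so the picture is not stretched. The sketch below works out the proportional size and prints a matching tag. The 1600 x 600 original is an assumed example, and both function names are hypothetical.

```python
from html import escape


def scaled_size(original_width, original_height, target_width):
    """Return (width, height) scaled down to target_width, keeping the aspect ratio."""
    if target_width >= original_width:
        # Never upscale: the image would only get blurrier.
        return original_width, original_height
    ratio = target_width / original_width
    return target_width, round(original_height * ratio)


def img_tag(src, alt, width, height):
    """Build an <img> tag that declares its dimensions and alt text."""
    return (f'<img src="{escape(src)}" height="{height}" '
            f'width="{width}" alt="{escape(alt)}" />')


width, height = scaled_size(1600, 600, 400)
print(img_tag("dog-jumping.jpg", "Dog Jumping", width, height))
```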

5 Powerful Signals To Make Your Website More Trustworthy

#1) Trust Seals

Don’t underestimate the power of trust seals on a website. Whether it’s BBB or TLS/SSL encryption, seals create the impression of greater security, which means visitors are more likely to trust the site. It’s important to note that some seals require paid memberships or other criteria. Check the respective organization’s requirements to determine whether or not the seal is a good fit for your website.

Some of the different trust seals include the following:

- Better Business Bureau

- VeriSign

- McAfee Secure

- TRUSTe

- Norton Secured

- Thawte

- Trustwave

- Geotrust

- Comodo

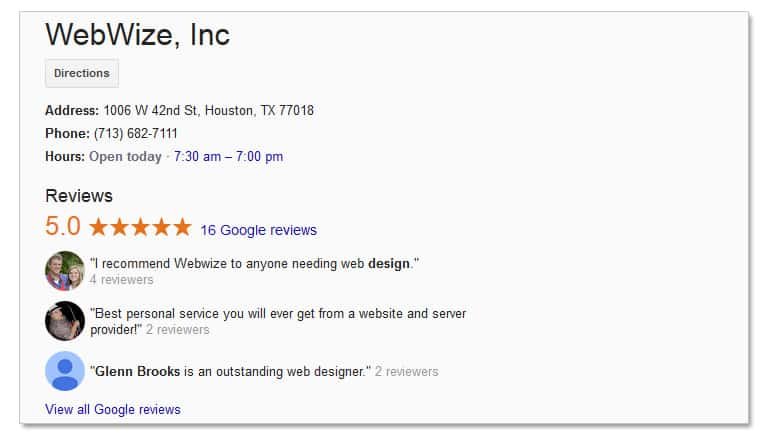

#2) Customer Reviews

There’s a reason why Amazon, Walmart.com and other major online retailers allow customers to publish reviews on their sites: doing so instills a higher level of trust in visitors. Customer reviews create a degree of transparency that makes visitors feel more comfortable making purchases.

A study conducted by Search Engine Land found that 72% of consumers trust reviews published online as much as personal recommendations from friends and family members. That’s an eye-opening statistic which attests to the power of online reviews.

#3) Fast Load Times

We discussed the importance of having a fast-loading website in a previous blog post, but it’s worth mentioning again. Load times directly affect whether or not visitors trust a site. Studies show that a typical user will wait an average of just 2 seconds before clicking the back button in his or her web browser. Websites that take longer than 2 seconds to load are viewed as less trustworthy than faster sites.

#4) Web Design

The overall design of a website will also impact whether or not visitors trust it. Mismatched color schemes, sloppy coding, hard-to-read text, pop-ups, and pop-unders are just a few elements that could send visitors heading out the door and to a competitor’s site. To create a more trustworthy website, use current web design practices such as HTML5 and Responsive Web Design (RWD), with a focus on a positive experience for the end user.

#5) Social Media Stats

Webmasters should leverage the power of social media to make their sites more trustworthy. Ask yourself which website you would trust more: one that displays 10,000 or more Facebook likes, or one that shows zero Facebook likes? Install a widget or app on your site to show its Facebook likes to visitors.

Facebook likes aren’t the only type of social signal that can be used as a trust signal on websites. Tweets and Google+ counts are also helpful in boosting a site’s trust level.

Pay-Per-Click Mistakes to Avoid

Broad Match Types

One of the most common “rookie” mistakes in PPC marketing is bidding on broad match keywords. Google AdWords offers three primary match types: broad, phrase and exact. Bidding on broad match keywords will result in your ads showing for the keyword, misspellings of the keyword, synonyms, related searches, and other relevant variations. Phrase and exact match, on the other hand, are better targeted and consequently deliver higher-quality traffic.

Overlooking Quality Score

Google introduced AdWords Quality Score back in 2005, with the goal of improving the overall quality of its ads. Each keyword is given a Quality Score ranging from 1 to 10 (10 being the highest quality). Having keywords with a high Quality Score will improve your Ad Rank and lower your average CPC – two critical elements in a successful AdWords campaign.

Here are some of the factors known to impact a keyword’s Quality Score:

- Ad’s expected CTR

- Display URL’s past CTR

- Landing page relevance/quality

- Ad relevance to search keyword

- Geographic performance

- Targeted device performance
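To see why Quality Score matters for both Ad Rank and CPC, it helps to run some numbers. The sketch below uses a commonly cited simplification (Ad Rank as bid times Quality Score, and cost per click as the Ad Rank of the ad below you divided by your Quality Score, plus one cent). Treat it as an assumption for illustration only: Google's real auction weighs more signals than this, and the bids and scores here are made up.

```python
def ad_rank(max_cpc_bid, quality_score):
    """Simplified Ad Rank: maximum bid multiplied by Quality Score (1 to 10)."""
    if not 1 <= quality_score <= 10:
        raise ValueError("Quality Score ranges from 1 to 10")
    return max_cpc_bid * quality_score


def estimated_cpc(rank_of_ad_below, quality_score):
    """Simplified click cost: just enough to beat the ad ranked below you."""
    return rank_of_ad_below / quality_score + 0.01


# Hypothetical advertisers: a low bid with a high score vs. a high bid with a low score.
ours = ad_rank(2.00, 10)
theirs = ad_rank(4.00, 4)
print(ours > theirs)                      # which ad ranks higher under this model
print(round(estimated_cpc(theirs, 10), 2))  # what the higher-ranked ad would pay
```

Under this simplified model, the advertiser with the better Quality Score ranks higher despite bidding half as much.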

Grouping Search and Display Together

When setting up a Google AdWords campaign, you’ll have to choose whether to show your ads on the Search Network, Display Network, or both. The Search Network covers searches made on Google.com, where ads appear as sponsored links at the top and right-hand side of the results page. The Display Network consists of millions of third-party websites.

Don’t make the mistake of grouping Search and Display in the same campaign, because the high-traffic, low-quality clicks from the Display Network will likely lower your overall Quality Score. A smarter approach is to split them into two campaigns, allowing you to optimize each campaign to perform on its respective network.

1st Place Bidding Battles

It’s easy to allow your emotions to take over in the world of Internet marketing. When a direct competitor puts their ad above yours, you may jack up your bids just to “outdo” them. The first place ad is almost sure to receive the most traffic, but that doesn’t necessarily translate into a positive ROI – especially when bidding battles occur. Don’t worry too much about Ad Rank and whether your ad is sitting in first place for its target keyword, but instead focus on ROI.
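A quick calculation shows why first place is not always the best place. The figures below are invented purely for illustration; the formula is the usual return on investment, profit divided by cost.

```python
def roi(revenue, ad_spend):
    """Return on investment as a fraction: 0.5 means a 50% return."""
    if ad_spend <= 0:
        raise ValueError("ad_spend must be greater than zero")
    return (revenue - ad_spend) / ad_spend


# Hypothetical month: first place costs more per click after a bidding battle.
first_place = roi(revenue=600, ad_spend=500)
third_place = roi(revenue=400, ad_spend=200)
print(f"1st place ROI: {first_place:.0%}")
print(f"3rd place ROI: {third_place:.0%}")
```

In this made-up scenario the third-place ad brings in less revenue but a much better return on every dollar spent.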

Not Using Negative Keywords

If you aren’t using negative keywords in your PPC campaigns, you’re missing out on one of the easiest ways to improve the quality of your traffic while also raising your click-through rate (CTR). If you run a business that sells virus protection software, for example, you may want to include the negative keyword “free.” Doing so will prevent your ad from showing to users searching for “free virus scanner,” “free virus protection,” etc.
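The idea behind negative keywords can be sketched in a few lines. This is a deliberately simplified model that only handles single-word negatives and ignores match types; it is not how the ad platform is actually implemented, and the function name is hypothetical.

```python
def is_blocked(search_query, negative_keywords):
    """Return True if any negative keyword appears as a whole word in the query."""
    words = set(search_query.lower().split())
    return any(negative.lower() in words for negative in negative_keywords)


negatives = ["free"]
for query in ["free virus scanner", "best virus protection software"]:
    status = "hidden" if is_blocked(query, negatives) else "eligible"
    print(f"{query!r}: ad is {status}")
```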

White Hat, Gray Hat, and Black Hat: What’s The Difference?

During your search for search engine optimization (SEO) tips and techniques, you’ll probably come across the term “black hat.”

Webmasters typically categorize their SEO and general traffic-building practices as either “white hat,” “gray hat” or “black hat.” The white hat label is usually given to SEO practices that follow the webmaster guidelines set forth by Google. This means no spam, no paid links, no automated software, and no link manipulation techniques. Gray hat refers to SEO practices that push the boundaries of what’s labeled acceptable by the search engines. It might not be spamming, but it could be reciprocal linking, content farms, or buying blog posts.

As opposed to the two other types, black hat SEO doesn’t have any limitations or follow any rules set forth. However, it’s important to understand that all of these terms – white hat, gray hat, and black hat – are created and used by the webmaster community. Search engines didn’t come up with these terms, and as such, there are no official criteria for defining which SEO techniques are considered white hat and which ones are considered black hat.

Generally speaking, black hat SEO could refer to both on-site and off-site optimization techniques used by website owners to help achieve higher search engine rankings. Some on-site techniques that could be considered black hat are keyword stuffing, invisible text and doorway pages. In the past, keyword stuffing and invisible text proved to be quite useful in boosting a website’s popularity/rank in the search engines; however, indexing algorithms have since caught on to these techniques, leaving them of little use today.

It’s important to remember that certain black hat SEO techniques can have an adverse impact on a website’s search engine ranking. Some people assume that a website’s rank is determined strictly by the number of backlinks it has, so they use automated software to crank out thousands of low-quality links on irrelevant pages. Google is a multibillion-dollar tech company, however, and it’s able to identify practices such as this, penalizing or even de-indexing the offending website.

All of these “hat terms” being used to describe SEO techniques can be confusing, to say the least. Try not to think about what’s white hat and what’s not, but instead look at the results.
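One rough way to spot keyword stuffing in your own copy is to measure how much of the text a single word takes up. The sketch below computes that share for a one-word keyword. There is no official threshold for what counts as stuffing, so treat the number as a prompt to reread the page and not as a pass/fail score; the function name is made up.

```python
import re


def keyword_density(text, keyword):
    """Return the fraction of words in text that equal keyword (case-insensitive)."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if not words:
        return 0.0
    return words.count(keyword.lower()) / len(words)


stuffed = "cheap shoes cheap shoes buy cheap shoes"
print(f"{keyword_density(stuffed, 'cheap'):.0%} of the words are 'cheap'")
```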

Visit https://support.google.com/webmasters/answer/35769?hl=en for the complete list of Google’s Webmaster Guidelines. Familiarize yourself with these rules to ensure your blog or website isn’t the next victim of a Panda update.

Panda 4.1: An Overview

Webmasters should keep a close eye on their search rankings in the upcoming days because Google recently rolled out a new update to its ranking algorithm. Dubbed Panda 4.1, it’s designed to lower the rankings of websites with thin, irrelevant and duplicate content, while raising the rankings of high-quality websites.

Panda 4.1: What You Should Know

According to the folks at SearchEngineLand.com, this is the 27th Panda update. Google first announced Panda 1.0 back in 2011, saying that it had improved rankings for a large number of high-quality websites.

“In recent months we’ve been especially focused on helping people find high-quality sites in Google’s search results. The ‘Panda’ algorithm change has improved rankings for a large number of high-quality websites, so most of you reading have nothing to be concerned about,” wrote Google in a 2011 blog post confirming the existence of Panda.

Panda 4.1 follows in the footsteps of its predecessor by actively identifying “signals” of low-quality websites and then lowering their rankings. It’s in Google’s best interest to deliver the most relevant results to its users; therefore, it must always tweak and optimize its algorithm to distinguish between low-quality and high-quality websites. The Panda update is one of the many tools it uses to accomplish this task.

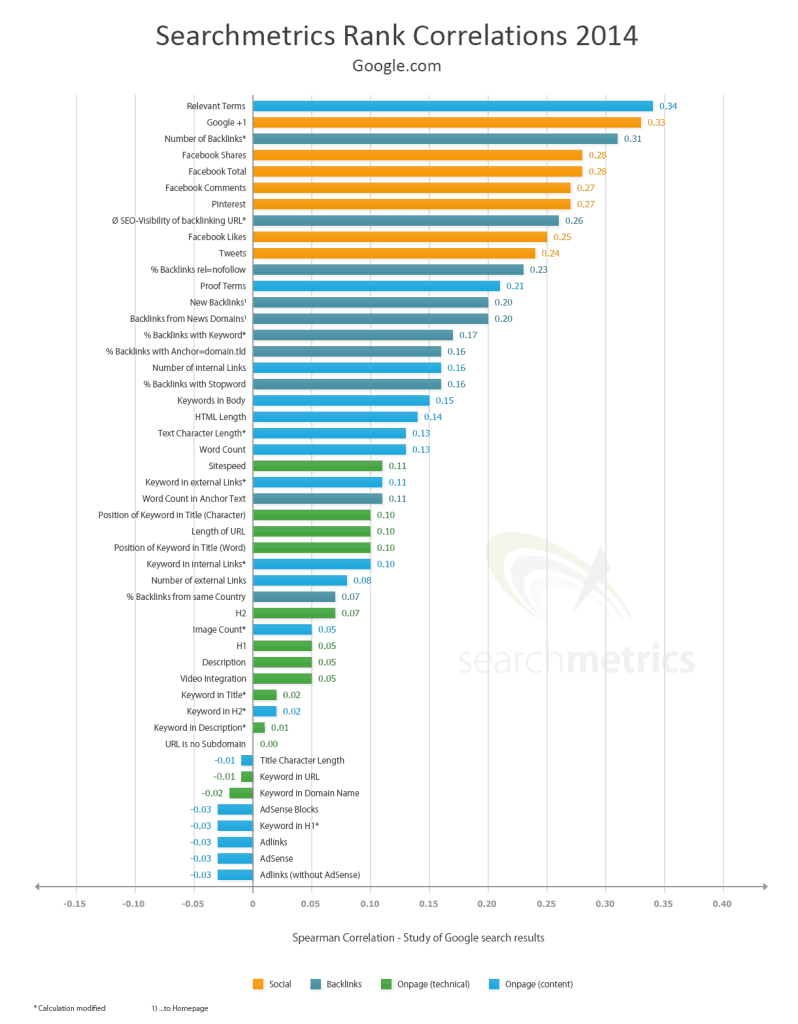

SearchMetrics.com conducted a study to identify the winners and losers of Panda 4.1. Some of the websites hit the hardest by Google’s latest algorithm update include Yellow.com (-79% search engine visibility), SimilarSites.com (-79%), Free-Coloring-Pages.com (-79%), Dwyer-Inst.com (-79%), and IsSiteDownRightNow.com (-74%).

Each time a website’s ranking drops in the search results, however, another site takes its place. SearchMetrics.com found the winners of Panda 4.1 to be ComDotGame.com (+1354%), HongKiat.com (+406%), Babble.com (+379%), Rd.com (+315%), and MediaMass.net (+308%).

Safeguarding Your Website From Panda 4.1

It’s important to note that just a few pages of poor-quality content can adversely affect the entire site’s ranking. Webmasters should get into the habit of auditing their websites on a regular basis to identify under-performing pages. Pages with low-quality, duplicate or cookie-cutter content should either be removed or improved to protect the website from Panda 4.1’s wrath. If you have multiple pages with similar content, for instance, try consolidating them. Doing so will promote a better experience for the end user, and it will encourage Google to rank the site higher in the SERPs.

The key thing to remember is that Panda 4.1 – like all previous Panda updates – focuses on content. Websites that deliver relevant, high-quality content will reap the benefits of higher search rankings, whereas websites with low-quality content will likely receive lower rankings. Go back over your content to ensure it’s factual, unique, trustworthy, engaging, and written with perfect or near-perfect grammar.
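Part of a content audit can be automated. The sketch below flags pairs of pages whose wording overlaps heavily, using a simple word-overlap score (the Jaccard index). It is one possible way to shortlist candidates for consolidation and says nothing about how Panda itself measures duplication. The URLs, page text, and the 0.7 cutoff are all made up for the example.

```python
from itertools import combinations


def jaccard(text_a, text_b):
    """Share of distinct words the two texts have in common (0.0 to 1.0)."""
    words_a = set(text_a.lower().split())
    words_b = set(text_b.lower().split())
    if not words_a and not words_b:
        return 1.0
    return len(words_a & words_b) / len(words_a | words_b)


def similar_pages(pages, threshold=0.8):
    """pages maps URL to page text; return (url_1, url_2, score) for close matches."""
    flagged = []
    for (url_1, text_1), (url_2, text_2) in combinations(pages.items(), 2):
        score = jaccard(text_1, text_2)
        if score >= threshold:
            flagged.append((url_1, url_2, score))
    return flagged


pages = {
    "/a": "houston web design services for small business",
    "/b": "houston web design services for small businesses",
    "/c": "contact us by phone",
}
for url_1, url_2, score in similar_pages(pages, threshold=0.7):
    print(f"{url_1} and {url_2} overlap by {score:.0%}")
```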


About Glenn Brooks

Glenn Brooks is the founder of WebWize, Inc. WebWize has provided web design, development, hosting, SEO and email services since 1994. Glenn graduated from SWTSU with a degree in Commercial Art and worked in the advertising, marketing, and printing industries for 18 years before starting WebWize.

Thanks for the practical advice on optimizing images – especially when to use jpg vs. png.

You are welcome Jack. If you have any questions regarding images, just let me know.